AI User Testing vs Traditional: Which One Wins in 2026?

TL;DR

AI user testing uses real human participants moderated by AI, not synthetic personas. Traditional testing uses a human researcher to moderate and analyze. Both produce real insights from real users.

AI testing delivers results in 24–48 hours. Traditional moderated testing takes 2–4 weeks. For sprint-based teams, that gap is the difference between evidence-based decisions and gut calls.

AI wins on speed, cost, scale, frequency, and global reach. Traditional wins on interpretive depth, emotional nuance, and open-ended discovery research.

AI user testing is not the same as unmoderated testing. The AI actively probes and adapts in real time, giving it the depth of moderated research at the speed and scale of unmoderated.

There's a problem most product teams know but rarely say out loud: research keeps arriving after the decision has already been made.

The sprint closed. The roadmap got locked. The feature shipped. And the user testing report landed in your inbox three weeks later, full of insights nobody can act on anymore.

This is the core tension in 2026. Building has gotten dramatically faster, but the feedback loop hasn't kept up.

AI user testing now wins for speed, scale, and continuous product development. Traditional testing still wins in high-depth, exploratory research where human judgment is critical.

If you're new to the space, start with AI User Testing: The Complete Guide for Product Teams (2026), or get the fundamentals from What is User Testing: Definition, Best Practices & Use Cases.

What Is Traditional User Testing?

Traditional user testing is a research method where a human researcher recruits real participants, moderates or observes them completing tasks with a product, and manually analyzes recordings and notes to surface usability insights. It includes moderated sessions (with a live facilitator), unmoderated sessions (self-guided with recording), and remote or in-person formats.

Traditional user testing has been the backbone of product research for decades, and for good reason. Done well, it produces a quality of insight that remains genuinely hard to replicate. A skilled human researcher catches tone of voice, hesitation that doesn't get verbalized, and conversational threads that weren't in the discussion guide but turn out to be the most valuable part of the session.

The standard process looks like this: a researcher writes a test plan and task scenarios, gets stakeholder sign-off, recruits and screens participants, runs sessions (moderated or unmoderated), reviews recordings, codes themes, and produces a synthesis report.

The 3 Core Formats

- Moderated in-person: Researcher and participant in the same room. Richest data, highest cost, slowest to scale.

- Moderated remote: Live session over video. Strong depth and more accessible, but still requires scheduling.

- Unmoderated remote: Participants complete tasks independently with recording tools. Faster, scalable, but shallower "why" data.

Where Traditional Testing Breaks Down in 2026

The problem isn't quality, it's structural. Traditional testing was designed for a product development pace measured in months. Four specific constraints make it hard to sustain at modern speeds:

- Timeline: A 20-participant moderated study takes 2–4 weeks from kickoff to report. By the time findings land, the sprint has closed, and the decision has been made on gut instinct anyway.

- Cost: Enterprise platforms cost $50,000–$200,000+ annually. Individual moderated sessions run $100–$150+ each, plus researcher time. This keeps testing centralized in dedicated research teams and inaccessible to the wider product team.

- Scale: Every additional session requires more moderator hours and more analysis time. You can't run 50 moderated sessions in a week without a full research team dedicated to nothing else.

- Professional testers: Large traditional panels carry a real risk of recruiting experienced research participants who've learned to behave differently from genuine first-time users. Their feedback can skew in ways that don't reflect your actual audience.
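
To put the per-session figure above in context, here is a small back-of-the-envelope calculation for the 20-participant study mentioned in the timeline bullet. It uses only the $100–$150 per-session range quoted above; researcher time and platform fees are deliberately left out, and the quoted range is open-ended ("$150+"), so treat the result as a floor rather than a full budget. The function name is made up for this example.

```python
def session_cost_range(participants: int, low: float = 100.0, high: float = 150.0) -> tuple[float, float]:
    """Session fees only, using the per-session range quoted in the article."""
    return participants * low, participants * high


low, high = session_cost_range(20)
print(f"20 moderated sessions: ${low:,.0f} to ${high:,.0f} in session fees alone")
```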

What Is AI User Testing?

AI user testing is a research method that uses real human participants moderated by artificial intelligence. The AI guides sessions in real time, asks adaptive follow-up questions based on what participants say and do, and automatically analyzes recordings to surface behavioral patterns and usability insights without requiring manual moderation or video review.

The most important thing to understand: real humans give feedback. The AI is the moderator and analyst, not a replacement for the people whose opinions and behaviors actually matter.

What makes AI moderation meaningfully different from simply unmoderated testing is that the AI doesn't just run a fixed script. It listens, adapts, and probes. When a participant hesitates on a step, the AI asks why. When they express confusion, it follows up. When they complete a task in an unexpected way, it notices. This is the depth of moderated research delivered at the speed and scale of unmoderated.

Important Distinction

AI user testing is not the same as synthetic user testing. Synthetic testing uses AI personas to simulate participant responses entirely, with no real humans involved. AI user testing uses real participants; the AI handles moderation and analysis. For decisions that affect real users, AI user testing (real people + AI infrastructure) is the relevant comparison to traditional methods. Synthetic testing is a separate category with its own use cases and limitations.

Read Synthetic User Testing: Guide for Product Teams and UX Researchers for a full breakdown.

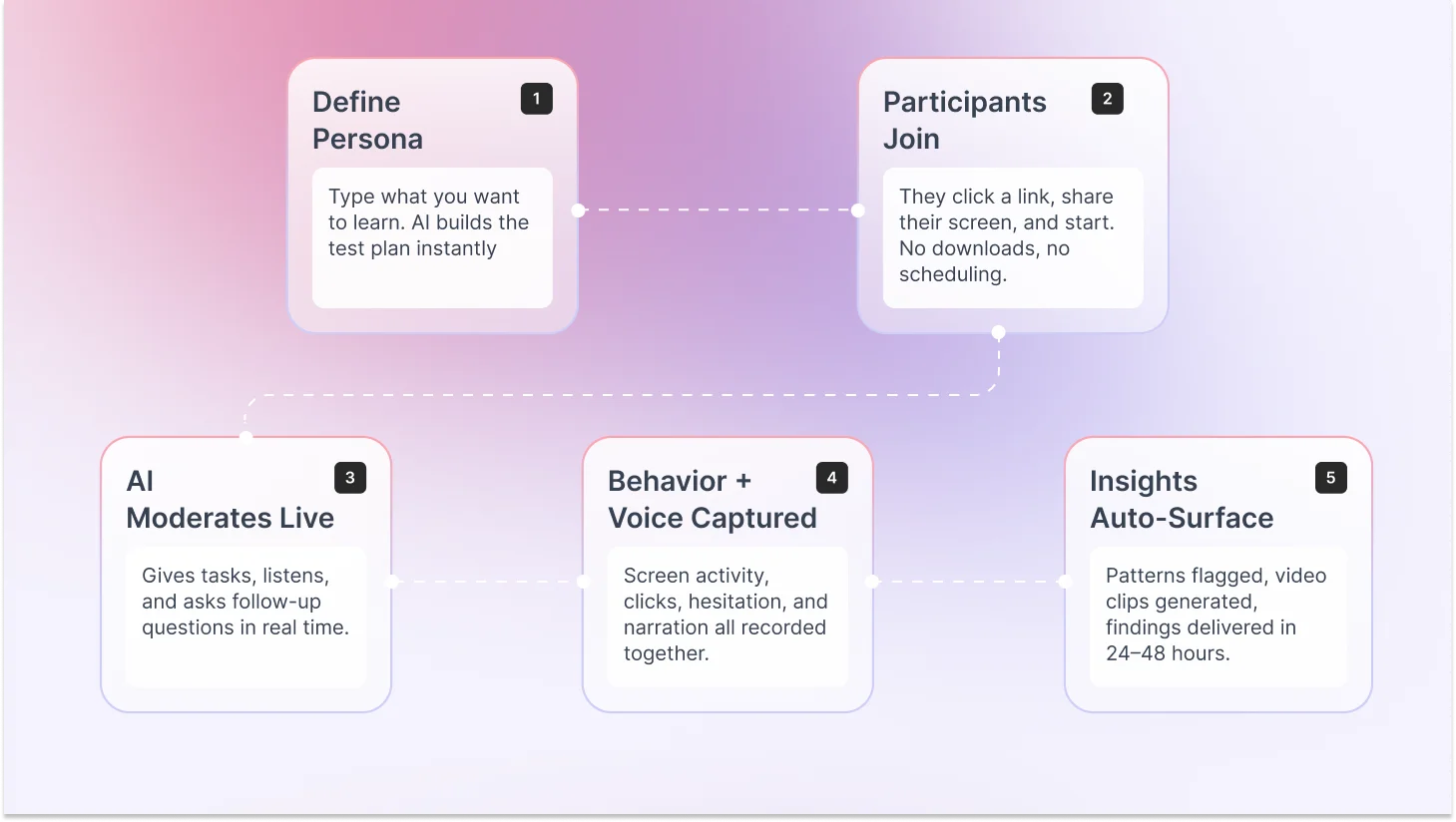

How Does AI User Testing Work Step by Step?

Here's what actually happens in an AI user testing session:

1. You describe what you want to learn

You enter a research goal, "I want to understand if new users can complete onboarding without dropping off," and the platform's AI builds a test plan: tasks, follow-up logic, and success criteria. This takes minutes, not days.

2. Participants join and share their screen

Participants receive a link, click to join, grant screen recording permission, and begin. No downloads, no scheduling, no researcher coordination. Sessions run in parallel across all participants simultaneously.

3. The AI moderates in real time

The AI gives tasks, listens to participant narration, observes screen behavior, and asks adaptive follow-up questions based on what's happening. When someone says, "This is confusing," the AI probes: "What specifically is confusing about this step?" It follows the session, not a script.

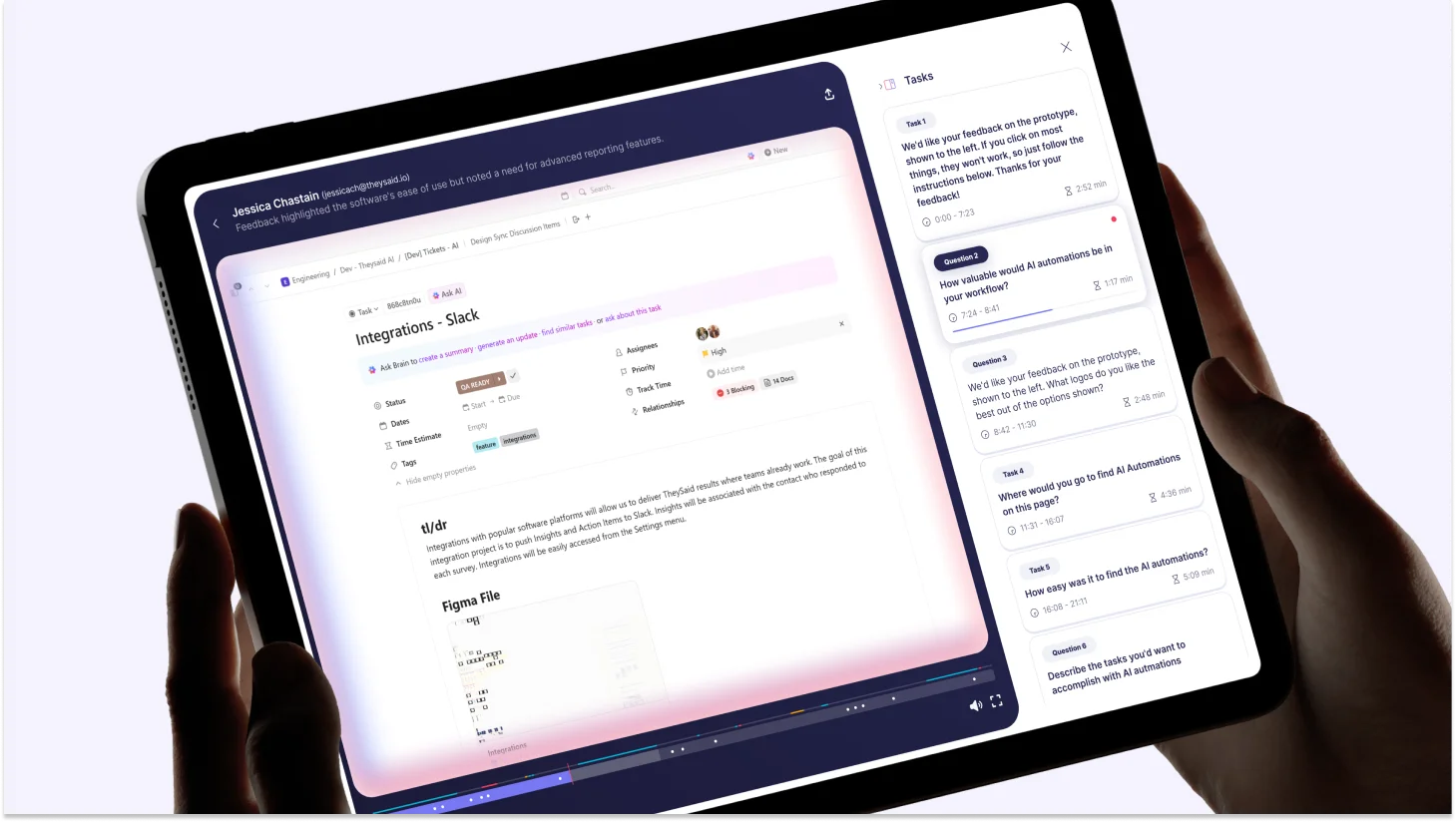

4. Behavioral and verbal data are captured together

Screen activity, voice narration, hesitation points, task completion times, and click behavior are all recorded and linked. The AI isn't working from a transcript alone; it sees what users do, not just what they say.

5. Insights surface automatically when sessions complete

The AI groups patterns across all participants, flags recurring friction points, generates timestamped video clips of key moments, calculates task completion rates, and delivers prioritized findings. No one watches 20 hours of recordings. Results are typically ready within 24–48 hours of launch.
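
As a rough illustration of the aggregation described in step 5, the sketch below computes a task completion rate and flags friction points that recur across participants. The `Session` structure, the tag names, and the 30% recurrence threshold are all assumptions made for this example; they do not describe any particular platform's data model or algorithm.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Session:
    """One participant's result for a single task (hypothetical structure)."""
    participant_id: str
    completed: bool
    friction_tags: list[str] = field(default_factory=list)


def completion_rate(sessions: list[Session]) -> float:
    """Share of sessions in which the participant finished the task."""
    if not sessions:
        return 0.0
    return sum(s.completed for s in sessions) / len(sessions)


def recurring_friction(sessions: list[Session], min_share: float = 0.3) -> list[tuple[str, int]]:
    """Friction tags seen in at least `min_share` of sessions, most common first.

    Each tag is counted once per participant so a single talkative
    participant cannot inflate a pattern.
    """
    counts = Counter(tag for s in sessions for tag in set(s.friction_tags))
    threshold = min_share * len(sessions)
    return [(tag, n) for tag, n in counts.most_common() if n >= threshold]


# Illustrative data only, not real study results.
sessions = [
    Session("p1", True, ["unclear_cta"]),
    Session("p2", False, ["unclear_cta", "password_rules"]),
    Session("p3", True, []),
    Session("p4", False, ["unclear_cta"]),
]

print(f"Completion rate: {completion_rate(sessions):.0%}")
print("Recurring friction:", recurring_friction(sessions))
```

Counting each tag once per participant is a deliberate choice here: a pattern should reflect how many people hit the problem, not how often one person mentioned it.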

Recommended read: User Testing Process: Step-by-Step Guide for Product Teams (2026).

AI User Testing vs Traditional User Testing: Quick Comparison

Researcher bias is one of the less-discussed advantages of AI moderation, and one that practitioners raise consistently.

What Does Each Role Actually Get From AI User Testing?

Product Managers: Validate Before You Commit

The PM's research problem is timing. Findings that arrive after sprint planning are interesting but not actionable. AI testing changes that.

- Launch a 15-participant study before your next roadmap review, not after

- Test onboarding assumptions before scaling a feature

- Get pre-launch validation in 48 hours, not 3 weeks

- Run your own studies without waiting on research team capacity

UX Researchers: Do the Work Only You Can Do

AI doesn't take your job. It takes the parts of your job that don't require your expertise, freeing you for the work that does.

- Stop watching 40 hours of recordings; AI surfaces the key moments

- Run more studies at a higher frequency without burning out

- Focus on research design, interpretation, and stakeholder influence

- Extend research capacity to teams that previously couldn't access it

Product Designers: Test at the Speed You Design

The designer's problem is cadence. Research timelines outlast design sprints. AI testing closes that gap.

- Test a Figma prototype on Monday, get findings by Wednesday

- Validate iterations before handing to engineering, not after

- Catch friction in onboarding flows when they're cheap to fix

- Build stakeholder confidence with real user evidence

5 Real Scenarios: Which Approach Should You Choose?

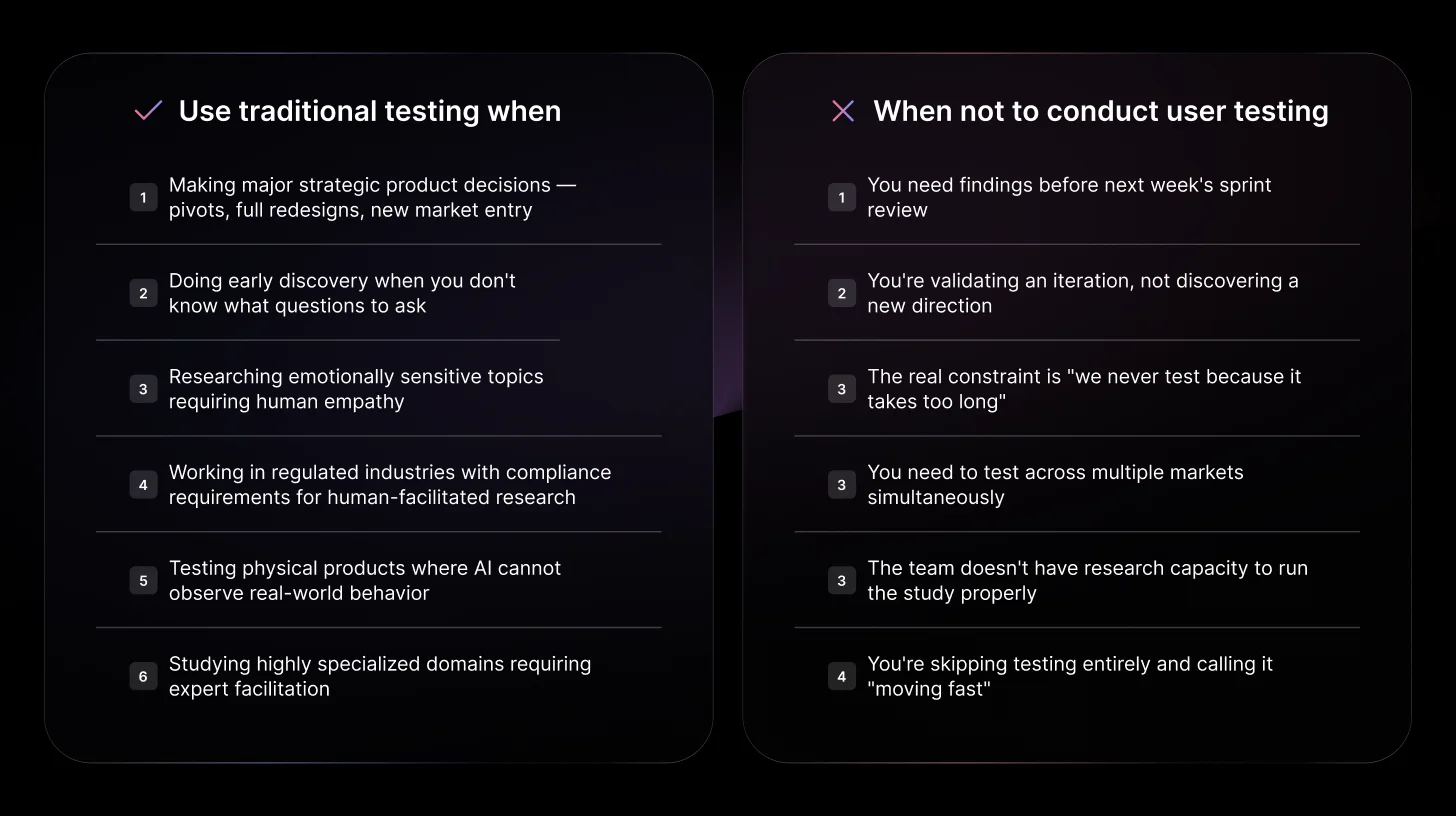

Scenario 1: Pre-launch — you're shipping in 5 days and need usability validation

Choose AI User Testing

Your team ships in five days. You need to know if new users can complete the core onboarding flow without dropping off. Traditional testing would take three weeks. AI user testing lets you launch a 20-participant session today and have behavioral data and friction points by tomorrow morning, with enough time to fix what's broken before launch.

Read: What Is Prototype Testing? Definition, Types & Step-by-Step Process

Scenario 2: Major product pivot — you're rethinking the core value proposition

Choose Traditional Testing

You're considering a fundamental shift in how your product works. This is a half-million-dollar engineering decision. You need deep, interpretive research: not just what users do, but why they make the decisions they make, what mental models they bring, and what they're not saying out loud. Invest in a skilled researcher running moderated sessions. The three-week timeline is worth it for a decision of this magnitude.

Scenario 3: Same feature launching across 5 global markets simultaneously

Choose AI User Testing

Your product launches in the US, UK, Germany, Brazil, and Japan in the same quarter. Running five parallel traditional research programs would take months and require local moderators in each market. AI user testing runs all five simultaneously in native languages, with consistent methodology, delivering comparable cross-market insights within 48 hours.

Scenario 4: Early discovery — you're entering a market you've never built for

Choose Traditional Testing

You're building for a user segment you don't understand yet. You need to explore their mental models, existing workflows, and the problems they're actually trying to solve, not the ones you've assumed. A skilled researcher running open-ended discovery interviews will follow unexpected threads that a structured AI session may not pursue. Human judgment here is irreplaceable.

Scenario 5: Sprint-by-sprint iteration — you ship weekly and never have time to test

Choose AI User Testing

Your team ships every week. You've updated the checkout flow, the dashboard empty state, and the notification settings across the last three sprints, and you have no validated data on any of them. AI user testing makes it realistic to run a 15-participant validation every sprint: same-day launch, findings the next morning. Over a quarter, that's 12 validated data points you'd otherwise never have.

When Traditional User Testing Is Still the Right Call

AI testing isn't the right answer for every situation. Here are the six cases where traditional methods genuinely win, and a warning about when teams mistakenly default to traditional out of habit rather than necessity.

Common Objections and Honest Answers

These are the real questions product teams raise when considering AI user testing for the first time.

Is AI user testing good enough for real product decisions?

For iterative validation, prototype testing, and usability studies, yes. The behavioral data is real, the patterns are real, and the findings are actionable. The important caveat: for major strategic decisions, AI testing is best used as one input alongside human judgment and traditional research, not as a sole source of truth. Use it for the decisions that need to happen fast. Use traditional for the ones that can afford depth.

Traditional user testing takes too long. What are the practical alternatives?

AI user testing is the primary alternative for the majority of product validation use cases. For teams that need the speed of unmoderated testing with the depth of moderated, AI-moderated testing specifically closes that gap. The practical answer for most teams: use AI testing for continuous sprint-level validation, and run traditional moderated studies quarterly for the research that genuinely needs human depth.

We don't have a UX researcher. Can we still run AI user testing?

Yes. AI user testing is specifically designed to be accessible to non-researchers. The AI handles test plan creation, participant moderation, and initial analysis. A product manager can launch a meaningful study in under 20 minutes. That said, a researcher's input on what to test and how to interpret findings still adds real value. AI testing democratizes execution, not strategic research thinking.

Can't we just use NPS and product usage data instead of user testing?

These metrics tell you what is happening, not why. NPS tells you if users are happy. Usage data tells you what features are being used. Neither tells you what's confusing users, why they're abandoning a flow, or what they were actually trying to do when they clicked the wrong thing. The strongest product teams pair quantitative signals with qualitative observation, not one or the other.

The Decision Framework: Which Approach to Use and When

Ship Smarter and Cheaper With TheySaid

Most product teams believe in user testing. They just can't make it fit because the traditional process is too slow, too expensive, and too dependent on research bandwidth to run at the pace modern product development requires.

TheySaid changes that equation. Real participants moderated by AI. A 5M+ panel across 70+ languages. Automatic insight extraction, which means nobody watches hours of recordings. Findings in 24–48 hours, not three weeks. And pricing that starts free, no contract, no enterprise negotiation, no $50,000 annual commitment before you've seen a single result.

The teams winning in 2026 aren't testing less. They're testing smarter, using AI user testing to validate every sprint and reserving traditional research for the decisions that genuinely need it. TheySaid makes the former fast enough to actually happen.

FAQs

What is the difference between AI user testing and unmoderated user testing?

Unmoderated testing gives participants a fixed script: they complete tasks independently with no follow-up, and you review recordings manually. AI user testing is different because the AI actively moderates in real time, asking adaptive follow-up questions based on what each participant says and does. This gives AI testing the depth of moderated research at the speed and scale of unmoderated testing.

Can a product manager run AI user testing without a UX researcher?

Yes. AI user testing is designed to be accessible to non-researchers. The AI handles test plan creation, participant moderation, and initial analysis. A product manager can launch a 20-participant study in under 20 minutes. A researcher's input on research design and interpretation still adds significant value; AI testing democratizes execution, not strategic thinking.

Is AI user testing as accurate as traditional human-moderated testing?

For iterative validation, prototype testing, and usability studies, AI user testing produces accurate, actionable findings. The key limitation: AI analysis can miss subtle non-verbal cues and emotional nuance that a skilled human researcher catches in person. AI summaries should be spot-checked against source data for high-stakes decisions. For the majority of product validation use cases (sprint testing, pre-launch checks, and concept validation), AI testing is accurate and reliable.
